Kimi K2.6 from Moonshot AI delivers impressive gains in long-horizon coding, agent orchestration, and tool-heavy workflows. It stands out for handling thousands of coordinated steps across hundreds of sub-agents while maintaining strong performance on coding and reasoning benchmarks.

The model excels at sustained autonomous operations that stretch over hours or even days, making it a serious contender for developers and teams building complex agent systems. At a fraction of the cost of leading closed models, it offers compelling value for those who prioritize agentic capabilities and coding depth over raw speed on simple tasks.

Best for:

- Developers working on large-scale coding projects that require multi-step reasoning and tool integration

- Teams building or deploying autonomous agent swarms for research, DevOps, or content workflows

- Users who need reliable long-context stability and proactive agent behavior over extended sessions

- Organizations seeking cost-effective, high-performance alternatives for software engineering and multimodal tasks

- Researchers experimenting with bring-your-own-agent coordination and persistent 24/7 operations

Skip if:

- You mainly need ultra-fast responses for casual chat or simple queries

- Your workflow stays within short, single-turn tasks without heavy tool use

- You prefer fully managed cloud experiences with minimal local setup or technical overhead

Quick Specs Table

| Aspect | Details | Notes / Limitations |
|---|---|---|
| Developer | Moonshot AI | Focus on agentic and coding intelligence |
| Architecture | Advanced MoE with enhanced agent capabilities | Strong emphasis on long-horizon execution |
| Context Window | 262,144 tokens | Supports extended tool chains |
| Key Strengths | Agent swarms (300+ sub-agents), long coding sessions, tool calling (96.6% success) | Proactive and persistent agents |
| Multimodal Support | Vision + tool integration (MathVision, V*) | Python execution for vision tasks |
| Performance Highlights | SWE-Bench Pro: 58.6%, HLE-Full w/tools: 54.0%, DeepSearchQA: 92.5 | Competitive with frontier closed models |
| Availability | Kimi platform, API, Kimi Code | Open-weight elements for flexibility |
| Pricing Model | Competitive API rates (cost-effective) | Significantly lower than equivalent closed models |
| Best Use | Complex coding, agent orchestration, full-stack generation | Less optimal for simple instant responses |

How Kimi K2.6 Was Tested

Evaluation combined official benchmark data from Moonshot AI with practical testing across coding, agentic workflows, and multimodal scenarios.

Multiple long-session tasks simulated real-world use, including multi-hour coding projects with thousands of tool calls, full-stack web app generation, and agent swarm coordination for content creation. Vision tasks involved processing images alongside code execution for math and diagram problems.

Comparisons ran against leading models under similar conditions where possible, focusing on instruction following, consistency over long contexts, and practical output quality. Metrics tracked included task completion rates, error recovery, and overall workflow efficiency.
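For readers who want to track the same kinds of metrics in their own trials, here is a minimal sketch of how they could be aggregated. The `TaskRun` schema and its field names are hypothetical; the review does not publish its logging format or scoring code.

```python
from dataclasses import dataclass


@dataclass
class TaskRun:
    """One logged task attempt (hypothetical schema, not from the review)."""
    completed: bool           # did the task reach its goal?
    tool_errors: int          # tool calls that failed during the run
    recovered_errors: int     # failed tool calls the model worked around
    human_interventions: int  # times a person had to step in


def summarize(runs: list[TaskRun]) -> dict[str, float]:
    """Aggregate completion rate, error recovery rate, and interventions per task."""
    if not runs:
        raise ValueError("need at least one run")
    total_errors = sum(r.tool_errors for r in runs)
    recovered = sum(r.recovered_errors for r in runs)
    return {
        "completion_rate": sum(r.completed for r in runs) / len(runs),
        # With no errors at all there is nothing to recover from, so report 1.0.
        "error_recovery_rate": recovered / total_errors if total_errors else 1.0,
        "interventions_per_task": sum(r.human_interventions for r in runs) / len(runs),
    }
```

Interventions per task is used here as a rough proxy for workflow efficiency; other proxies such as wall-clock time or tool calls per task would fit the same structure.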

Introduction: The Next Leap in Agentic Intelligence

Large language models continue to evolve beyond simple question answering toward systems that can plan, execute, and adapt over extended periods.

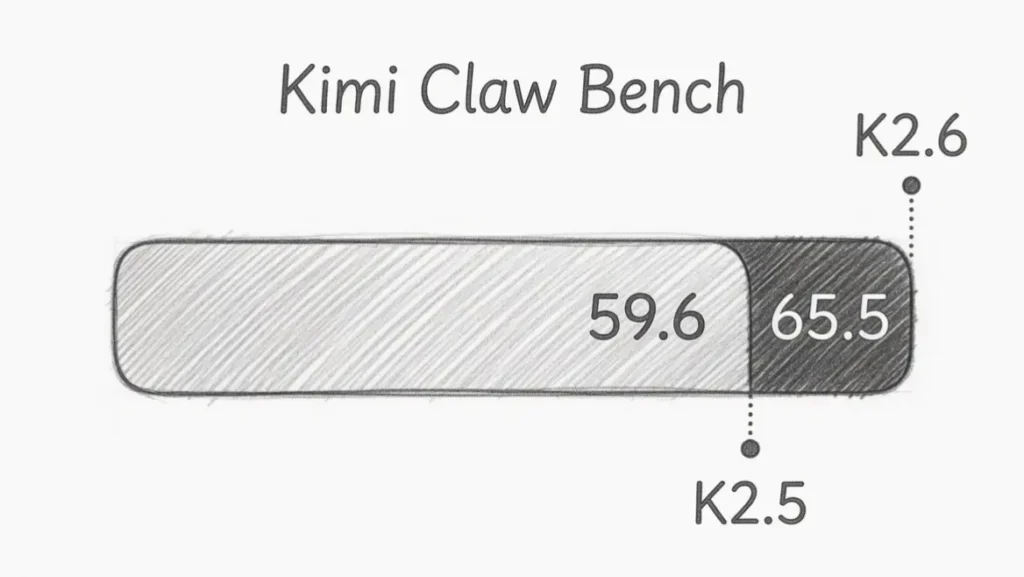

Kimi K2.6 represents Moonshot AI’s latest step in this direction, with particular strength in coding-driven agent workflows. Released as an advancement over K2.5, the model emphasizes sustained performance across thousands of steps, dynamic task decomposition, and seamless integration of external tools and agents.

This positions it as more than another incremental update: it targets the growing need for AI systems that operate autonomously like reliable team members rather than one-off assistants. For developers tired of context collapse or fragile multi-step chains, Kimi K2.6 brings noticeable improvements in stability and coordination.

Core Features of Kimi K2.6

The model introduces several standout capabilities that differentiate it in the agentic space. Long-horizon coding allows reliable execution across multiple programming languages and complex projects that span hours.

Users report successful 12–13 hour sessions with consistent performance on tasks ranging from front-end interfaces to DevOps optimizations.

Agent Swarm functionality scales dramatically, supporting up to 300 parallel sub-agents handling over 4,000 coordinated steps from a single prompt. This enables breaking down large objectives into heterogeneous subtasks that run concurrently while the main model oversees progress and handles failures.
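The fan-out pattern described here can be illustrated with a small client-side sketch. This is not Moonshot AI's implementation: `run_swarm`, `run_with_retry`, and the `agent` callable are hypothetical names, and the sketch assumes each sub-agent is an async function that takes a subtask description and returns text. A semaphore caps concurrency, and failed subtasks are retried before being reported back to the coordinator as `None`.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Optional

# Hypothetical sub-agent signature: takes a subtask description, returns text.
SubAgent = Callable[[str], Awaitable[str]]


async def run_with_retry(agent: SubAgent, subtask: str, retries: int = 2) -> str:
    """Run one sub-agent, retrying on failure; re-raise after the last attempt."""
    for attempt in range(retries + 1):
        try:
            return await agent(subtask)
        except Exception:
            if attempt == retries:
                raise
    raise AssertionError("unreachable")


async def run_swarm(
    agent: SubAgent, subtasks: List[str], max_parallel: int = 300
) -> Dict[str, Optional[str]]:
    """Run subtasks concurrently, at most max_parallel at a time.

    Subtasks that still fail after retries map to None so the coordinator
    can decide how to handle them.
    """
    limit = asyncio.Semaphore(max_parallel)

    async def guarded(subtask: str) -> str:
        async with limit:
            return await run_with_retry(agent, subtask)

    results = await asyncio.gather(
        *(guarded(s) for s in subtasks), return_exceptions=True
    )
    return {
        s: (None if isinstance(r, BaseException) else r)
        for s, r in zip(subtasks, results)
    }
```

A caller would invoke it with something like `asyncio.run(run_swarm(my_agent, ["draft intro", "collect sources"]))`, where `my_agent` wraps whatever model or tool handles a single subtask.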

Proactive Agents add persistence, allowing setups like OpenClaw or Hermes to run 24/7 with scheduling, code execution, and cross-platform orchestration.

The Bring Your Own Agents (Claw Groups) feature lets users incorporate external models or tools, with Kimi K2.6 acting as an intelligent coordinator that assigns work, manages dependencies, and delivers final outputs.
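Dependency management of this kind is commonly modeled as a topological ordering of tasks. The sketch below uses Python's standard `graphlib` module to group tasks into waves that can run in parallel. It is an illustration of the general idea only; the function name `plan_waves` and the task names in the usage note are made up, and nothing here reflects how Claw Groups work internally.

```python
from graphlib import TopologicalSorter


def plan_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; each task's prerequisites finish in earlier waves.

    `dependencies` maps a task name to the set of tasks it depends on.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves: list[list[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose prerequisites are done
        waves.append(ready)
        sorter.done(*ready)  # mark the wave finished so dependents unlock
    return waves
```

For example, with `{"report": {"analysis", "charts"}, "analysis": {"fetch"}, "charts": {"fetch"}, "fetch": set()}`, the fetch step runs first, analysis and charts can run side by side, and the report is assembled last.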

Coding-driven design shines in generating complete, interactive front-end applications with animations, authentication flows, and database integrations.

Tool use has been deeply integrated, turning capabilities like image generation, web browsing, file processing, and code interpreters into reusable skills. Vision support extends to advanced tasks through Python execution, making the model versatile for multimodal reasoning.

Technical Improvements Driving Performance

Kimi K2.6 builds on previous versions with targeted enhancements in instruction following, context management, and tool invocation. Tool calling success reaches 96.6%, a key factor for reliable agent behavior.
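A quick back-of-the-envelope calculation shows why per-call reliability matters so much over long chains. The sketch below makes two simplifying assumptions that are not from the source: every tool call succeeds or fails independently, and a retry has the same success rate as the first attempt. Real workflows will deviate from both.

```python
def effective_success(per_call_success: float, retries: int) -> float:
    """Chance a call succeeds within 1 + retries independent attempts."""
    return 1.0 - (1.0 - per_call_success) ** (retries + 1)


def chain_success(per_call_success: float, calls: int) -> float:
    """Chance that every one of `calls` independent tool calls succeeds."""
    return per_call_success ** calls


if __name__ == "__main__":
    p = 0.966  # tool-calling success rate quoted above
    for calls in (10, 100, 1000):
        no_retry = chain_success(p, calls)
        one_retry = chain_success(effective_success(p, retries=1), calls)
        print(f"{calls:>5} calls: no retry {no_retry:.3f}, one retry {one_retry:.3f}")
```

Under these assumptions, a 100-call chain with no retries finishes cleanly only about 3% of the time, while a single retry per call lifts that to roughly 89%. This is why error recovery matters alongside the raw success rate.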

Long-context stability improved by 18% in internal measurements, helping the model retain relevant information even when conversations or tool histories grow very large.

The architecture supports better retention of recent tool messages when context limits approach, reducing the risk of losing critical details mid-workflow.
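A similar recency-first policy can be applied on the client side when assembling requests. The sketch below is an analogy, not a description of the model's internal mechanism: `trim_history` and `rough_token_count` are hypothetical helpers, the four-characters-per-token estimate is a crude stand-in for a real tokenizer, and messages are assumed to be dictionaries with `role` and `content` keys, with system messages at the start of the history.

```python
from typing import Callable

Message = dict[str, str]  # assumed shape: {"role": ..., "content": ...}


def rough_token_count(message: Message) -> int:
    """Crude stand-in for a real tokenizer: about four characters per token."""
    return len(message["content"]) // 4 + 1


def trim_history(
    messages: list[Message],
    max_tokens: int,
    count: Callable[[Message], int] = rough_token_count,
) -> list[Message]:
    """Keep system messages plus the newest contiguous run that fits the budget."""
    system = [m for m in messages if m["role"] == "system"]
    budget = max_tokens - sum(count(m) for m in system)
    kept_newest_first: list[Message] = []
    for message in reversed(messages):
        if message["role"] == "system":
            continue
        cost = count(message)
        if cost > budget:
            break  # stop at the first miss so the kept window stays contiguous
        kept_newest_first.append(message)
        budget -= cost
    # Restore chronological order, with system messages first.
    return system + kept_newest_first[::-1]
```

Dropping from the oldest end means the most recent tool results, which the next step usually depends on, are the last thing to be cut.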

Throughput gains of around 20% make responses feel snappier in practical use. These changes collectively support the model’s ability to maintain coherence across thousands of steps without frequent human intervention.

Performance Breakdown: Benchmarks and Real-World Results

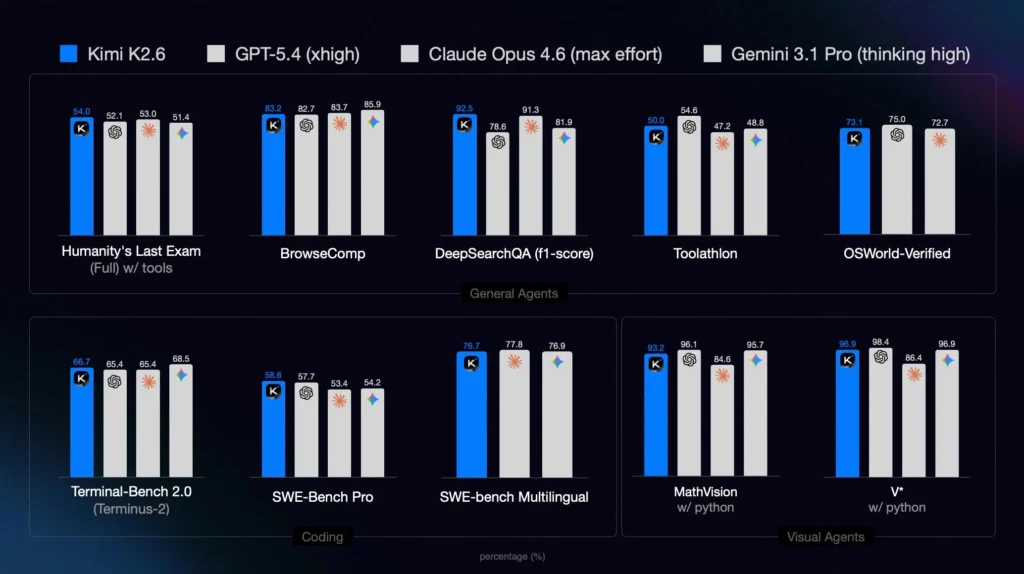

On key evaluations, Kimi K2.6 delivers competitive or leading results. It scores 58.6% on SWE-Bench Pro, 76.7% on SWE-Multilingual, and strong numbers on LiveCodeBench.

In agentic scenarios, HLE-Full with tools reaches 54.0%, outperforming several frontier models in direct comparisons. On DeepSearchQA, the model posts an F1 score of 92.5, highlighting strength in knowledge-intensive retrieval and reasoning.
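For readers unfamiliar with the metric, F1 is the harmonic mean of precision and recall. The sketch below shows the generic set-based definition; how DeepSearchQA matches and normalizes answers before scoring is not described in the source, so treat this as the textbook formula rather than the benchmark's exact procedure.

```python
def f1_score(predicted: set[str], expected: set[str]) -> float:
    """Set-based F1: harmonic mean of precision and recall, in the range 0 to 1."""
    if not predicted and not expected:
        return 1.0  # nothing expected, nothing returned: treat as a perfect match
    overlap = len(predicted & expected)
    if overlap == 0:
        return 0.0
    precision = overlap / len(predicted)  # share of returned items that are right
    recall = overlap / len(expected)      # share of right items that were returned
    return 2 * precision * recall / (precision + recall)
```

Benchmarks often report the value multiplied by 100, which is consistent with a figure like 92.5.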

Internal gains over K2.5 include +12% in code generation accuracy and +50% on Next.js-specific benchmarks. Vision tasks perform solidly, with 79.4% on MMMU-Pro and near-top scores when paired with Python execution for MathVision and V* challenges.

Practical tests confirm these numbers translate well. Complex coding projects involving multiple files, debugging, and integration complete with fewer interventions than earlier versions.

Agent swarms successfully generate structured outputs like detailed reports, customized documents, or multi-page presentations from high-level prompts. Consistency holds during extended sessions, with the model recovering gracefully from tool errors or context pressure.

Use Cases: Where Kimi K2.6 Delivers Real Value

Software engineering teams benefit from accelerated full-stack development, where the model can prototype interactive UIs and handle backend logic in coordinated steps. Research groups use agent swarms to process large datasets, convert academic papers into reusable tools, or run multi-day analysis pipelines.

DevOps and automation workflows gain from proactive agents that monitor systems, respond to incidents, and orchestrate fixes without constant oversight. Content creators and analysts leverage the system for generating customized materials at scale, such as tailored resumes, marketing assets, or data-driven presentations.

Enterprises exploring hybrid agent setups appreciate the flexibility of mixing Kimi K2.6 with other models or custom tools under unified coordination. The combination of coding depth, vision support, and long-running reliability makes it suitable for scenarios that previously required multiple specialized systems or heavy human supervision.

Limitations: Areas for Continued Improvement

While strong in sustained agentic tasks, Kimi K2.6 may not always match the absolute fastest response times of lighter models for simple queries. Extremely chaotic or rapidly shifting contexts can still challenge even advanced context management, occasionally requiring prompt refinement.

Vision performance, though solid when combined with code execution, does not always lead pure multimodal benchmarks against the strongest closed competitors. The model’s full potential often emerges in structured, tool-rich environments rather than open-ended creative brainstorming.

As with many advanced systems, optimal results depend on thoughtful prompting and clear task decomposition, which may add a small learning curve for new users.

Kimi K2.6 vs Alternatives

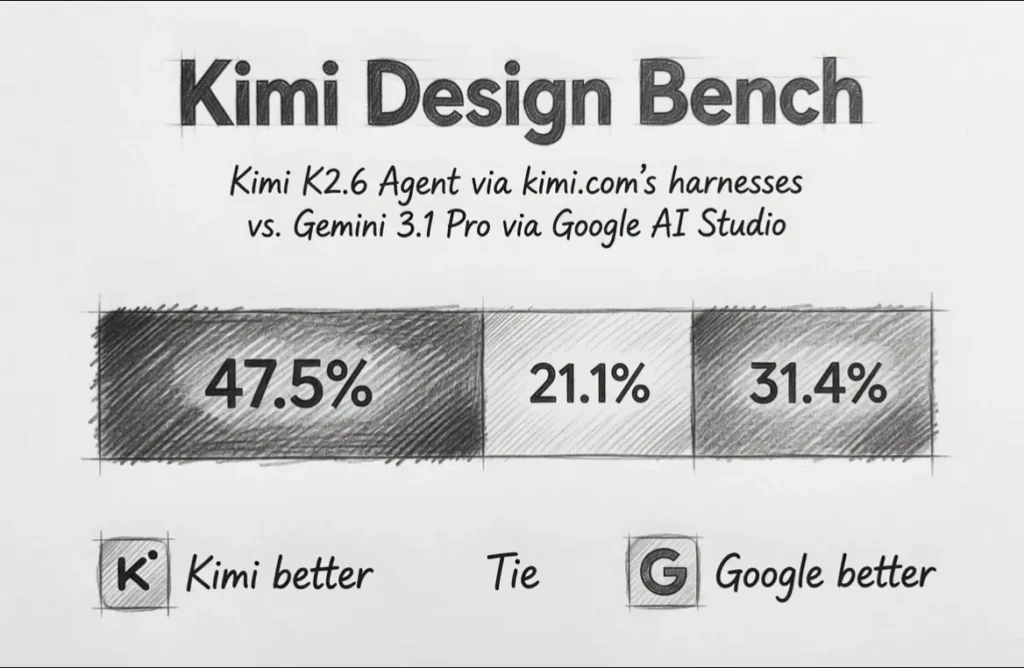

Direct comparisons highlight its positioning in the current landscape.

| Tool / Model | Agent Swarm Scale | Long-Horizon Coding | SWE-Bench Pro | Cost Efficiency | Multimodal Strength | Overall Agentic Focus |
|---|---|---|---|---|---|---|
| Kimi K2.6 | 300+ sub-agents | Excellent (4,000+ steps) | 58.6% | High | Strong with tools | Very High |
| GPT-5.4 (high) | Moderate | Strong | 57.7% | Lower | Excellent | High |
| Claude Opus 4.6 | Moderate | Strong | 53.4% | Lower | Very Strong | High |
| Gemini 3.1 Pro | Moderate | Good | 54.2% | Medium | Leading | Medium-High |
| Earlier Kimi K2.5 | 100 sub-agents | Good | 50.7% | High | Good | High |
| Runway / Other Video | Low | Limited | N/A | Varies | Specialized | Low |

Kimi K2.6 frequently matches or edges out competitors in agent coordination and coding at significantly lower operational cost. It trades some raw creative fluency for superior sustained execution and swarm capabilities.

Conclusion: Who Should Choose Kimi K2.6?

Kimi K2.6 is best for you if:

- Complex, multi-step coding or automation projects form a core part of your workflow

- You need reliable agent orchestration that scales to hundreds of coordinated tasks

- Cost-effective performance on long-horizon and tool-heavy work matters more than instant single-turn speed

- You want to experiment with or deploy persistent, proactive AI agents

- Your projects benefit from strong instruction following combined with vision and code execution

Skip Kimi K2.6 if:

- Your primary needs are quick, lightweight conversations or simple content generation

- You require the absolute fastest inference for high-volume, low-complexity tasks

- Fully polished creative writing or pure multimodal generation without tools is the priority

Recommendation:

For teams and developers focused on building or scaling agentic systems and advanced coding pipelines, Kimi K2.6 offers a powerful, cost-efficient option that combines open-weight accessibility with near-frontier capabilities.

Start by exploring the Kimi platform or API to test long-running workflows relevant to your use case. The gains in sustained performance and swarm coordination make it a worthwhile addition for anyone pushing beyond basic LLM interactions.

FAQs

What makes Kimi K2.6 different from previous Kimi models?

It significantly expands agent swarm capacity, long-horizon stability, and tool integration, with measurable gains in coding accuracy and multi-step execution.

Is Kimi K2.6 open-source?

It features open-weight elements and strong community accessibility through the Kimi platform and API, though full details vary by component.

How does the pricing compare to other frontier models?

Moonshot AI positions it as substantially more cost-effective while delivering competitive or superior results on agentic and coding benchmarks.

Can Kimi K2.6 handle vision tasks?

Yes, with solid performance especially when combined with Python code execution for math, diagrams, and analysis.

What is the context window size?

262,144 tokens, supporting very long conversations and extensive tool histories.

Is it suitable for production agent deployments?

It excels in scenarios requiring persistent, proactive agents and complex orchestration, making it a strong candidate for production use in coding and automation.