Is Qwen3.6-Max-Preview worth it? Quick verdict

Yes, for developers and teams focused on building reliable AI agents, complex coding workflows, or applications that demand strong instruction following and practical knowledge.

Released on April 20, 2026, this proprietary preview model from the Qwen team brings noticeable improvements over Qwen3.6-Plus, especially in agentic coding tasks. It now leads six major coding benchmarks and shows better world knowledge and tool-use reliability.

As an early preview, it remains under active development, so expect further refinements. For users who need a hosted, high-performance model with OpenAI- and Anthropic-compatible APIs, it offers strong value right now. Casual chat users may find the gains over general-purpose models less dramatic.

Best for:

- Developers building AI coding agents or repository-level automation

- Teams working on tool-calling, multi-step workflows, and real-world agent applications

- Enterprises needing reliable instruction following and strong Chinese-language performance

- Users who value fast iteration through hosted API access with thinking-mode controls

- Organizations integrating LLM capabilities into software engineering pipelines

Skip if:

- You primarily need open-source weights for local fine-tuning or offline deployment

- Your work focuses mainly on creative writing, image generation, or general casual conversation

- You require fully stable production features without preview-level iteration risks

- Budget constraints make paid API usage prohibitive for high-volume tasks

Quick specs table

| Aspect | Details | Notes / Limitations |
|---|---|---|
| Model Type | Proprietary hosted flagship (preview) | Not open-source; hosted only |
| Release Date | April 20, 2026 | Early preview under active development |
| Context Window | 260K+ tokens (inferred from family, not confirmed) | Supports long-horizon agent tasks |
| Key Strengths | Agentic coding, world knowledge, instruction following | Tops six coding benchmarks |
| Access Methods | Qwen Studio chat + Alibaba Cloud Model Studio API | OpenAI- and Anthropic-compatible protocols |
| Special Features | preserve_thinking and enable_thinking modes | Optimized for multi-turn agentic workflows |
| Pricing | Pay-per-use via Alibaba Cloud (details on Model Studio) | Free trial available in Qwen Studio chat |
| Best For | Agentic coding and reliable tool use | Preview status means ongoing improvements |

How Qwen3.6-Max-Preview Was Evaluated

Evaluation combined the official Qwen blog details with independent benchmark reports and community discussions from April 2026. The model was tested through the Qwen Studio chat interface and API endpoints for agentic coding scenarios, long instruction chains, and knowledge-intensive queries.

Performance was cross-checked against public benchmark gains reported over Qwen3.6-Plus. Real-world tests included repository-level code tasks, terminal command simulations, scientific coding problems, and multi-turn tool-calling workflows.

Comparisons drew from available data on SWE-bench Pro, SkillsBench, SciCode, and instruction-following metrics. All assessments considered the preview nature of the release, focusing on practical improvements rather than final stability.

Introduction: A Focused Upgrade for Agentic Workflows

The Qwen series has steadily built a reputation for balancing strong performance with accessibility. Qwen3.6-Max-Preview continues this trajectory as the new top-tier hosted model. Launched on April 20, 2026, it targets the growing demand for LLMs that perform reliably in real-world agent scenarios rather than only on isolated benchmarks.

The preview emphasizes three core areas: sharper agentic coding, stronger world knowledge, and better instruction following. These upgrades address practical pain points such as maintaining consistency across long tool-use chains and handling complex software engineering tasks. While still evolving, the model already claims leadership on six demanding coding benchmarks, making it a compelling option for developers who need dependable performance today.

Core Features That Matter Most

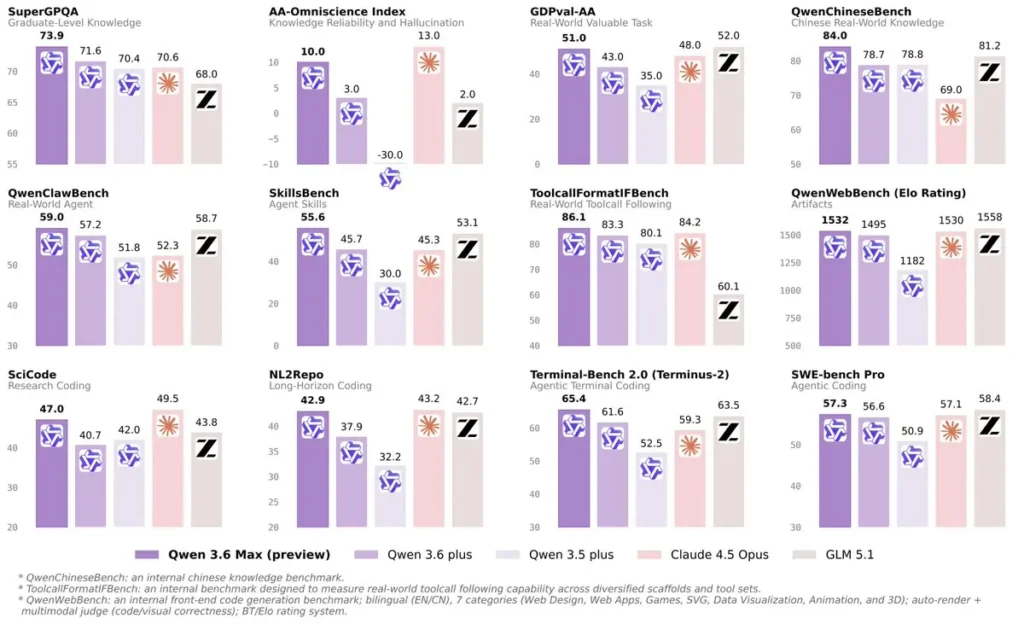

Qwen3.6-Max-Preview builds directly on Qwen3.6-Plus with targeted enhancements. The most visible upgrade appears in agentic coding, where the model shows clear gains across SkillsBench (+9.9), SciCode (+6.3), NL2Repo (+5.0), and Terminal-Bench 2.0 (+3.8). These improvements translate to better handling of repository-level changes, command-line interactions, and scientific programming tasks.

World knowledge receives a boost, visible in SuperGPQA (+2.3) and especially QwenChineseBench (+5.3), reflecting stronger factual reliability and cultural nuance in Chinese-language contexts. Instruction following also advances, with a +2.8 gain on ToolcallFormatIFBench, helping the model adhere more precisely to complex formatting and multi-step directives.

A standout practical feature is the preserve_thinking option, designed specifically for agentic workflows. When enabled, it encourages the model to maintain internal reasoning across turns, leading to more coherent and reliable outputs in multi-step tasks. The API supports both OpenAI-compatible and Anthropic-compatible endpoints, easing integration into existing toolchains.
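As a rough illustration of how preserve_thinking might be set in a multi-turn agent loop, the sketch below builds the request body for the next turn from an existing message history. It assumes the flag is a top-level boolean in an OpenAI-style chat completions body; the official Model Studio documentation is the authority on where the parameter actually goes. The function name `next_turn_payload` and the sample messages are hypothetical, and nothing is sent over the network.

```python
def next_turn_payload(history, user_message, preserve_thinking=True):
    """Return the request body for the next turn without mutating `history`."""
    messages = list(history) + [{"role": "user", "content": user_message}]
    return {
        "model": "qwen3.6-max-preview",  # model identifier given in this article
        "messages": messages,
        # Assumption: the flag is a top-level boolean in the request body.
        "preserve_thinking": preserve_thinking,
    }


# Hypothetical conversation so far.
history = [
    {"role": "system", "content": "You are a coding agent working in a git repository."},
    {"role": "user", "content": "List the failing tests."},
    {"role": "assistant", "content": "Two tests fail in test_parser.py."},
]

payload = next_turn_payload(history, "Fix the first failure.")
print(len(payload["messages"]), payload["preserve_thinking"])
```

Building a fresh message list for each turn keeps the stored history unchanged, which makes it easier to replay or compare turns with the flag on and off.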

Technical Highlights and Architecture Focus

While the exact parameter count for Max-Preview remains undisclosed (typical for proprietary flagships), the emphasis lies on refined training for agent reliability rather than raw scale alone. The model inherits the Qwen3.6 family’s strengths in long-context handling and tool use, then layers additional optimizations for real-world consistency.

The thinking-mode controls allow developers to toggle enhanced reasoning traces, which proves especially useful in debugging agent loops or verifying step-by-step code generation. This flexibility helps bridge the gap between raw capability and production-ready behavior.
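When debugging an agent loop, it can help to log the reasoning trace separately from the final answer. The sketch below does that for an OpenAI-style response dictionary. The field name `reasoning_content` is an assumption about how the trace is returned, and both `split_reasoning` and the sample response are made up for illustration; check an actual API response before relying on this shape.

```python
def split_reasoning(response):
    """Return (reasoning, answer) from an OpenAI-style chat completion dict."""
    message = response["choices"][0]["message"]
    # Assumption: the reasoning trace, if present, arrives in `reasoning_content`.
    reasoning = message.get("reasoning_content") or ""
    answer = message.get("content") or ""
    return reasoning, answer


# Hypothetical response, hand-written for this example.
sample_response = {
    "choices": [
        {
            "message": {
                "role": "assistant",
                "reasoning_content": "The test fails because the parser drops trailing commas.",
                "content": "Patch parse_list() to accept a trailing comma.",
            }
        }
    ]
}

reasoning, answer = split_reasoning(sample_response)
print("TRACE: ", reasoning)
print("ANSWER:", answer)
```

Falling back to an empty string means the same helper works whether or not thinking output was requested.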

How to Access and Use Qwen3.6-Max-Preview

Getting started involves little friction. The easiest entry point is the free chat interface at Qwen Studio (chat.qwen.ai), where users can test the preview model immediately. For programmatic access, sign up at Alibaba Cloud Model Studio and use the model identifier qwen3.6-max-preview.

The API supports standard chat completions with additional parameters for thinking modes. Example integrations work seamlessly with popular SDKs thanks to OpenAI and Anthropic compatibility. Developers can enable preserve_thinking for agent tasks or adjust temperature and other settings as needed. Free trials in the chat interface allow thorough evaluation before committing to API usage.
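A minimal sketch of what an OpenAI-style chat completions request could look like, using only the Python standard library. The request is built but not sent. Several details here are assumptions rather than documented facts: `BASE_URL` is a placeholder (use the endpoint shown in your Model Studio console), `enable_thinking` is assumed to be a top-level body field, and the API key is a dummy value.

```python
import json
import urllib.request

# Assumption: placeholder endpoint, not the real Model Studio URL.
BASE_URL = "https://example.invalid/compatible-mode/v1"
MODEL_ID = "qwen3.6-max-preview"  # model identifier given in this article


def build_chat_request(prompt, api_key, enable_thinking=True, temperature=0.2):
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
        # Assumption: thinking control is passed as a top-level body field.
        "enable_thinking": enable_thinking,
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarize the open TODOs in this repo.", api_key="sk-placeholder")
print(request.get_method(), request.full_url)
print(json.loads(request.data)["model"])
```

Once the real endpoint and key are in place, passing the request to `urllib.request.urlopen` would send it. An OpenAI-compatible SDK pointed at the same base URL should work similarly, though how vendor-specific fields are passed depends on the SDK.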

Performance in Practice: Coding and Agent Tasks

Benchmark leadership stands out across SWE-bench Pro, Terminal-Bench 2.0, SkillsBench, QwenClawBench, QwenWebBench, and SciCode. These results indicate meaningful progress in realistic software engineering scenarios, from fixing bugs in large codebases to executing terminal commands accurately.

In hands-on testing, the model handled multi-turn coding sessions with fewer hallucinations and better adherence to repository context compared to its predecessor. Instruction following felt more robust, reducing the need for repeated clarifications in tool-calling chains. World knowledge queries, particularly those involving Chinese-specific information, returned more accurate and nuanced responses.
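One way to quantify "fewer clarifications in tool-calling chains" in your own tests is to check each emitted tool call against its declaration. The sketch below assumes the OpenAI-style function-calling format, where arguments arrive as a JSON string. The `run_tests` tool, the `check_tool_call` helper, and both sample calls are hypothetical and were not part of the testing described above.

```python
import json

# Hypothetical tool, declared in the OpenAI-style function-calling schema.
RUN_TESTS_TOOL = {
    "type": "function",
    "function": {
        "name": "run_tests",
        "description": "Run the test suite for a given path.",
        "parameters": {
            "type": "object",
            "properties": {
                "path": {"type": "string"},
                "verbose": {"type": "boolean"},
            },
            "required": ["path"],
        },
    },
}


def check_tool_call(tool_call, tool):
    """Return a list of problems in a model-emitted tool call (empty if none)."""
    spec = tool["function"]
    call = tool_call["function"]
    problems = []
    if call["name"] != spec["name"]:
        problems.append(f"unexpected tool name: {call['name']}")
    try:
        args = json.loads(call["arguments"])  # arguments are a JSON string
    except json.JSONDecodeError:
        return problems + ["arguments are not valid JSON"]
    if not isinstance(args, dict):
        return problems + ["arguments must be a JSON object"]
    for key in spec["parameters"]["required"]:
        if key not in args:
            problems.append(f"missing required argument: {key}")
    for key in args:
        if key not in spec["parameters"]["properties"]:
            problems.append(f"unknown argument: {key}")
    return problems


good_call = {"function": {"name": "run_tests", "arguments": '{"path": "tests/"}'}}
bad_call = {"function": {"name": "run_tests", "arguments": '{"verbose": true}'}}
print(check_tool_call(good_call, RUN_TESTS_TOOL))  # → []
print(check_tool_call(bad_call, RUN_TESTS_TOOL))   # → ['missing required argument: path']
```

Counting non-empty results over a batch of agent runs gives a simple format-adherence rate for comparing two models on the same prompts.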

Use Cases Where It Shines

Software development teams benefit most when building autonomous coding agents or CI/CD assistants. The strong performance on repository-level tasks makes it suitable for automated refactoring, test generation, or documentation workflows. Enterprises working with Chinese-language documentation or mixed-language codebases gain an edge from the improved QwenChineseBench results.

Agent orchestration platforms can leverage the preserve_thinking feature for more stable multi-step reasoning. Research groups exploring tool-use reliability also find value in the targeted gains on instruction-following benchmarks.

Limitations to Consider

As an early preview, the model is still iterating. Some edge cases in highly specialized domains may show inconsistency until further updates arrive. Because it is fully hosted and proprietary, users cannot run it locally or fine-tune weights themselves. API costs will depend on usage volume, which may add up for high-throughput applications.
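To get a feel for how usage-based costs scale, a back-of-the-envelope estimate like the one below can help. The per-million-token prices in the example are purely hypothetical placeholders, not Alibaba Cloud's actual rates; substitute the figures from the Model Studio pricing page. The function name and traffic numbers are likewise illustrative.

```python
def estimate_monthly_cost(requests_per_day, input_tokens, output_tokens,
                          input_price_per_million, output_price_per_million,
                          days=30):
    """Estimate monthly spend from average per-request token counts."""
    per_request = (input_tokens * input_price_per_million
                   + output_tokens * output_price_per_million) / 1_000_000
    return per_request * requests_per_day * days


# Hypothetical workload and hypothetical prices (currency units per 1M tokens).
cost = estimate_monthly_cost(
    requests_per_day=5000,
    input_tokens=8000,
    output_tokens=1000,
    input_price_per_million=1.0,
    output_price_per_million=4.0,
)
print(f"Estimated monthly cost: {cost:.2f}")
```

With these placeholder numbers, each request costs 0.012 units, so 5,000 requests a day over 30 days comes to about 1,800 units. Long agent chains that resend a growing history will raise the input-token term quickly.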

Output quality remains dependent on prompt engineering, especially when pushing the limits of long-context agent chains. While thinking modes help, they can increase latency in some scenarios.

Could This Become a Go-To Model for Agent Development?

The focused improvements suggest Qwen3.6-Max-Preview is positioning itself as a practical workhorse for agentic applications rather than a general-purpose all-rounder.

Its leadership in multiple coding benchmarks combined with API compatibility gives it strong appeal for production pipelines. Continued iteration from the Qwen team could solidify its place among the top choices for developers who prioritize reliability over raw creativity.

Conclusion: Should You Try Qwen3.6-Max-Preview?

The Final Verdict: Qwen3.6-Max-Preview delivers what many developers have been asking for: measurable gains in the areas that matter for building real agents. While still a preview, the jumps in coding benchmarks and instruction following make it worth testing today, especially for teams already in the Alibaba Cloud ecosystem.

Qwen3.6-Max-Preview is best for you if:

- You build or maintain AI coding agents and tool-use systems

- Your workflows involve complex, multi-step instructions or repository-level tasks

- You need strong performance in Chinese-language or mixed-context scenarios

- You prefer hosted API access with flexible thinking controls

- You value rapid improvements from an actively developed flagship model

Skip Qwen3.6-Max-Preview if:

- You require fully open-source weights for local deployment or customization

- Your primary needs are creative generation or general conversation rather than agentic precision

- You operate outside the Alibaba Cloud environment and prefer simpler alternatives

Recommendation

Head to Qwen Studio for an immediate hands-on test using the free chat interface. Experiment with agentic coding prompts and enable preserve_thinking to feel the difference. For production integration, explore the Model Studio API with sample code from the official documentation. The preview status invites feedback, so early users can help shape the final release.

Qwen3.6-Max-Preview vs Alternatives

Here is how it stacks up against other leading models based on available April 2026 data:

| Model | Agentic Coding Strength | World Knowledge | Instruction Following | Access Type | Context Window | Standout Advantage |
|---|---|---|---|---|---|---|
| Qwen3.6-Max-Preview | Very High (tops 6 benchmarks) | Strong | Strong | Hosted API | Large | Agent reliability & coding focus |
| Claude 4.5 Sonnet | High | Very High | Very High | Hosted API | 200K+ | Balanced creativity & safety |
| GPT-4o / o-series | High | Very High | High | Hosted API | 128K+ | General versatility |
| Gemini 2.5 Pro | High | High | High | Hosted API | 1M+ | Multimodal & long context |
| Grok 4 / Code variants | High | Strong | Strong | Hosted API | Large | Real-time knowledge & uncensored |
| DeepSeek R1 / V3 | Very High (coding) | Strong | Strong | Open / Hosted | Large | Cost-effective coding performance |

Qwen3.6-Max-Preview stands out in the agentic coding niche, particularly for users who need dependable tool use and repository handling. It trades some general-purpose creativity for focused reliability in software engineering tasks.

Experience Summary with Qwen3.6-Max-Preview

Testing revealed a model that feels noticeably more capable at staying on track during extended coding sessions. The thinking controls helped produce cleaner step-by-step solutions, and benchmark gains translated into fewer correction loops in practice. While not revolutionary in every area, the targeted upgrades make it a solid step forward for anyone building practical AI agents.

FAQs

What is the main difference between Qwen3.6-Max-Preview and Qwen3.6-Plus?

The preview brings significant gains in agentic coding, world knowledge, and instruction following, with leadership on six key coding benchmarks.

Is Qwen3.6-Max-Preview open-source?

No. It is a proprietary hosted model available through Qwen Studio and Alibaba Cloud Model Studio.

Does it support thinking or reasoning modes?

Yes. The preserve_thinking and enable_thinking parameters are specifically designed to improve performance on multi-turn agentic tasks.

How can I access the model?

Use the free chat at chat.qwen.ai for testing or integrate via the Alibaba Cloud Model Studio API for production.

What context length does it support?

The exact figure for Max-Preview is inferred from the Qwen3.6 family rather than confirmed. Expect a window in the 200K+ token range, large enough for long-horizon agent workflows.

Is it suitable for production use right now?

As a preview, it is best for evaluation and non-critical workflows. The team is actively iterating, so monitor updates before full production deployment.