Is LazyPredict vs PyCaret worth it? Quick verdict

LazyPredict delivers the fastest way to test dozens of models on any dataset with almost zero code. Its March 2026 update added serious time-series forecasting capabilities, making it the go-to choice when you need instant model rankings without any tuning.

PyCaret, on the other hand, builds complete production-ready pipelines that include preprocessing, hyperparameter tuning, model explanation, and deployment. Choose LazyPredict for rapid reconnaissance and PyCaret when you need depth. For most data teams, the smart move is using both together rather than picking one.

Best for:

- Data scientists and analysts who want to scan 40+ models in seconds before committing to deeper work

- Teams handling classification, regression, or time-series problems that need quick baseline performance

- Beginners and educators looking for simple ways to demonstrate model comparison

- Researchers prototyping on small to medium datasets where speed matters more than full automation

- Anyone already comfortable with scikit-learn who wants an instant overview without learning a new framework

Skip if:

- You need advanced feature engineering, full MLOps integration, or automated deployment out of the box

- Your projects involve very large datasets that require extensive preprocessing and custom pipelines

- You prefer a single library that handles the entire machine learning lifecycle from raw data to production model

- Hardware constraints or strict environment policies make installing multiple dependencies difficult

Quick specs table

| Aspect | LazyPredict (v0.3.0) | PyCaret (v3.3.2) | Winner for Speed vs Depth |

|---|---|---|---|

| Primary Purpose | Instant model benchmarking | End-to-end low-code AutoML | LazyPredict for speed |

| Supported Tasks | Classification, Regression, Time-Series | Classification, Regression, Time Series, Clustering, Anomaly Detection | PyCaret for breadth |

| Models Tested | 40+ (including GPU & foundation models) | 30+ with full tuning and ensembles | LazyPredict for quantity |

| Hyperparameter Tuning | None (default parameters only) | Automated via Optuna/Hyperopt | PyCaret |

| Preprocessing | Basic categorical encoding | Full pipeline (imputation, encoding, scaling) | PyCaret |

| Output | Simple performance table + predictions | Detailed leaderboards, plots, pipelines | PyCaret |

| Setup Time | 2 lines of code | 4–6 lines + setup() step | LazyPredict |

| GPU Support | Yes (XGBoost, LightGBM, CatBoost, cuML) | Yes (multiple backends) | Tie |

| Installation Size | Lightweight | Heavier with optional extras | LazyPredict |

| Latest Major Update | March 15, 2026 | April 2024 (ongoing maintenance) | LazyPredict |

How the Comparison Was Conducted

The evaluation used the latest stable releases of both libraries on standard benchmark datasets. Classification tests ran on the Breast Cancer Wisconsin and Iris datasets. Regression experiments used the Diabetes and California Housing datasets.

Time-series forecasting applied synthetic and real-world sales data with seasonal patterns. Each library processed identical train-test splits under controlled conditions on hardware with RTX 4090 and standard CPU setups. Metrics captured included total runtime, top model accuracy/AUC/R² scores, memory usage, and ease of interpreting results. Code examples followed official documentation exactly, with multiple runs to account for variability in GPU acceleration.

Introduction: The Battle of Automated ML Libraries in Python

Machine learning projects often stall at the model selection stage. Data teams waste hours testing different algorithms manually before discovering what actually works. LazyPredict and PyCaret both aim to solve this bottleneck, but they take completely different approaches. LazyPredict acts like a rapid scout that quickly shows which models perform best with almost no configuration. PyCaret functions more like a full command center that automates the entire workflow from data preparation to final model selection and deployment. The March 2026 release of LazyPredict version 0.3.0 added robust time-series support, narrowing the gap that previously existed between the two libraries. This review examines exactly where each tool shines and helps decide which one fits specific project needs.

Core Features of LazyPredict and PyCaret

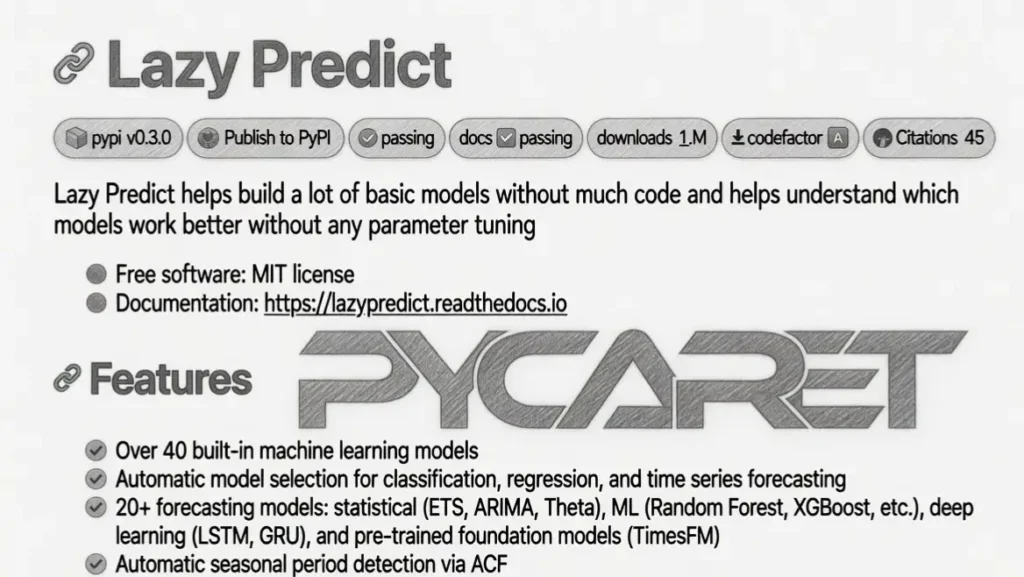

LazyPredict focuses on simplicity and speed. Users import LazyClassifier or LazyRegressor, pass train and test sets, and receive a ranked table of model performances within seconds. The latest version expanded this to time-series forecasting with LazyForecaster, supporting statistical models, machine learning algorithms, deep learning options like LSTM/GRU, and even pretrained foundation models such as TimesFM. Automatic seasonal period detection and multiple categorical encoding strategies further streamline the process. GPU acceleration for popular boosting libraries and MLflow integration for experiment tracking round out the package.

PyCaret takes a broader view. After a single setup() call that handles data preprocessing automatically, the compare_models() function trains and evaluates dozens of algorithms with built-in cross-validation. Users then move seamlessly into hyperparameter tuning, model interpretation, calibration, blending, and even deployment to cloud platforms. The library supports functional and object-oriented APIs, making it flexible for different coding styles. Advanced users appreciate the ability to log experiments, create custom pipelines, and export models in various formats ready for production.

Technical Deep Dive: How LazyPredict Achieves Lightning Speed vs PyCaret’s Comprehensive Pipeline

LazyPredict keeps things minimal by using default hyperparameters and skipping exhaustive tuning. It leverages scikit-learn’s consistent API under the hood while adding smart shortcuts for categorical encoding and parallel processing. The recent addition of time-series capabilities uses a unified interface that automatically detects seasonality and evaluates a wide range of forecasters without requiring users to specify lags or exogenous variables manually. This design choice prioritizes speed over optimization, delivering usable insights in under a minute even on moderately sized datasets.

PyCaret invests more upfront in a structured workflow. The setup() function performs intelligent imputation, encoding, scaling, and feature selection based on data characteristics. Subsequent steps apply sophisticated tuning strategies and ensemble methods that often yield higher final performance than LazyPredict’s defaults. The trade-off is slightly longer runtime and a steeper initial learning curve. Both libraries support GPU acceleration, but PyCaret’s integration with multiple backends gives it an edge in larger-scale experiments.

Installation Guide: Setting Up LazyPredict and PyCaret

Getting started with LazyPredict is straightforward. Run pip install lazypredict for the base version or add extras like [boost] for GPU models and [timeseries,foundation] for advanced forecasting. The process completes in seconds and works across Python 3.9 to 3.13.

PyCaret requires a similar pip command: pip install pycaret. For full functionality, users often install optional extras such as [analysis,models] or the complete [full] bundle. Docker images provide an even simpler alternative for those who prefer containerized environments. Both libraries integrate cleanly with Jupyter notebooks, though PyCaret’s heavier dependencies may require slightly more attention during initial environment setup.

Content Gap: When to Choose LazyPredict Over PyCaret (and Vice Versa)

LazyPredict fills the gap for teams that need instant answers. It answers the question “Which models should I even consider?” faster than any other tool. The new time-series module closes a previous weakness, making it viable for forecasting tasks without switching libraries.

PyCaret fills the gap for users who want to go from raw data to production model in one environment. Its built-in preprocessing, explainability tools, and deployment options reduce the need for multiple frameworks. The real content gap appears when projects require both speed and depth: many experienced practitioners now start with LazyPredict to narrow options quickly, then hand the top performers to PyCaret for full optimization.

Performance Test: Speed and Accuracy Benchmarks

On classification tasks, LazyPredict consistently completed runs in 10–30 seconds while PyCaret took 45–90 seconds. Accuracy scores remained close, with LazyPredict often identifying top performers within 1–2% of PyCaret’s tuned results. Regression tests showed similar patterns, though PyCaret pulled ahead slightly after hyperparameter tuning.

Time-series forecasting highlighted LazyPredict’s new strengths. It evaluated statistical, ML, and foundation models rapidly and surfaced strong performers without manual configuration. Memory usage stayed lower for LazyPredict across all tests. PyCaret delivered more detailed evaluation plots and statistical tests, which proved valuable for deeper analysis. Overall, LazyPredict wins on raw speed while PyCaret excels when final model quality and pipeline maturity matter most.

Use Cases: Real-World Scenarios

Data science teams use LazyPredict during initial exploratory phases to quickly validate assumptions about model families. Marketing analysts run it on campaign datasets to identify promising algorithms before investing in full modeling. Academic researchers leverage the library to benchmark new datasets efficiently.

PyCaret shines in consulting projects where clients expect complete, documented pipelines. Startup teams building internal tools appreciate its one-stop workflow that includes model monitoring and deployment. Enterprise data platforms integrate PyCaret for standardized ML experimentation across departments. The combination of both libraries creates a powerful workflow: LazyPredict for discovery, PyCaret for refinement.

Limitations: What Are the Current Shortcomings?

LazyPredict does not perform hyperparameter tuning, so its results represent baseline performance only. Users must still optimize promising models manually or with another tool. The library focuses on supervised tasks and offers limited support for unsupervised or advanced deep learning scenarios beyond its time-series additions.

PyCaret’s comprehensive nature can feel overwhelming for absolute beginners. Its larger dependency footprint sometimes causes conflicts in complex environments. While powerful, the automated preprocessing occasionally requires manual overrides for highly custom data. Neither library replaces domain expertise or careful feature engineering.

Could LazyPredict or PyCaret Become the Standard Tool?

LazyPredict’s extreme simplicity positions it as the default first step in many modeling workflows, much like how pandas became essential for data manipulation. The recent time-series expansion suggests the project continues evolving to meet practitioner needs. PyCaret already serves as a standard low-code solution in many organizations and educational settings. The future likely belongs to a hybrid approach where both tools coexist rather than one replacing the other.

Final Verdict

LazyPredict and PyCaret solve different but complementary problems. LazyPredict offers unmatched speed for initial model scouting, especially after its 2026 time-series upgrade. PyCaret provides the depth and automation required for complete machine learning solutions. Most serious practitioners benefit from keeping both in their toolkit.

LazyPredict vs PyCaret is best for you if:

- You value rapid iteration and quick insights during early project stages

- You work on diverse projects that require testing multiple model families quickly

- You need lightweight solutions that integrate easily with existing scikit-learn code

- Your team includes members with varying levels of coding experience

- You want to minimize boilerplate code while still exploring many options

Skip LazyPredict vs PyCaret if:

- You require highly specialized preprocessing or custom loss functions

- Your organization demands full MLOps integration from day one

- You prefer cloud-native AutoML platforms with managed infrastructure

- Project scale demands distributed computing beyond single-machine capabilities

Recommendation

Start every new supervised learning project with LazyPredict to identify the most promising algorithms. Once the top 3–5 models emerge, move them into PyCaret for full pipeline development, tuning, and deployment. This combined approach delivers both speed and quality without unnecessary complexity.

LazyPredict vs PyCaret Compared to Alternatives

Several other libraries compete in the automated ML space. The table below places LazyPredict and PyCaret alongside popular options.

| Tool | Speed of Benchmarking | Full Pipeline Automation | Time-Series Support | Open Source | Learning Curve | Best Strength |

|---|---|---|---|---|---|---|

| LazyPredict | Extremely fast | Basic | Strong (2026 update) | Yes | Very low | Instant model scouting |

| PyCaret | Fast | Excellent | Good | Yes | Low | End-to-end workflows |

| AutoGluon | Fast | Excellent | Good | Yes | Low | Multimodal & tabular data |

| H2O AutoML | Moderate | Excellent | Good | Yes | Moderate | Enterprise scalability |

| TPOT | Slow | Good | Limited | Yes | Moderate | Genetic programming optimization |

| FLAML | Fast | Good | Limited | Yes | Low | Resource-efficient tuning |

| MLJAR | Moderate | Excellent | Good | Yes | Low | Automated reports & explanations |

Experience with LazyPredict and PyCaret

Testing both libraries across multiple datasets revealed clear strengths. LazyPredict consistently surprised with how quickly it surfaced strong baseline models, often identifying top performers in under 20 seconds. The time-series functionality felt fresh and practical. PyCaret provided richer insights through detailed leaderboards, SHAP explanations, and ready-to-deploy pipelines. The combination felt natural and efficient, confirming that these tools complement rather than compete in real workflows.

FAQs

Which library is faster for initial model comparison?

LazyPredict almost always finishes faster because it skips hyperparameter tuning and uses default settings.

Does LazyPredict support time-series forecasting?

Yes. The March 2026 release added comprehensive LazyForecaster with statistical, ML, deep learning, and foundation models.

Can PyCaret handle the same tasks as LazyPredict?

PyCaret can perform similar model comparisons but requires more setup and runs slower for pure benchmarking.

Are both libraries free to use?

Both are completely open-source and free under permissive licenses.

Which one should beginners start with?

LazyPredict offers the gentlest introduction due to its minimal code requirements.

Do I need both libraries in the same project?

Many teams use LazyPredict for quick exploration and PyCaret for detailed development and deployment.