Highlights:

- Anthropic CEO Dario Amodei predicts that rival AI companies will catch up to the capabilities of **Claude Mythos Preview** within just a few months.

- Claude Mythos Preview, announced on April 7, 2026, achieved a record **93.9%** on SWE-bench Verified and leads in coding, reasoning, and cybersecurity benchmarks.

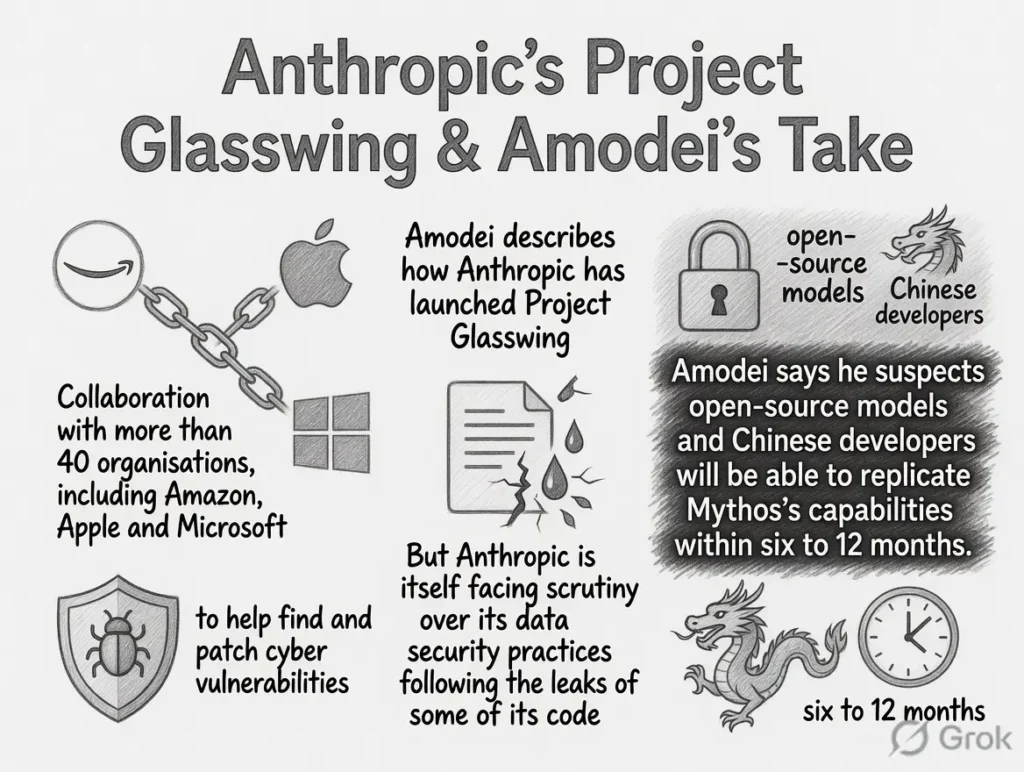

- The model is not publicly available yet. It is being shared only with select partners through **Project Glasswing** to help find and fix critical security vulnerabilities.

- Amodei underscored the relentless pace of AI progress with the phrase “There’s no end to the rainbow.”

- Chinese models like Kimi K2.5 and GLM-5 are rapidly closing the gap, raising important questions about global competition and security.

I recently came across Anthropic CEO Dario Amodei’s latest comments, and I have to say they left me thinking hard about how quickly everything is changing in AI. He openly predicted that other companies will match the impressive abilities of Claude Mythos Preview in just a few months.

When I dug into the details, I found that this model stands out for concrete, measurable reasons. Announced on April 7, 2026, Claude Mythos Preview scored an incredible 93.9% on the tough SWE-bench Verified benchmark. That is a massive jump from previous versions, and the model also leads in advanced reasoning and, especially, cybersecurity tasks.

Claude Mythos Preview excels at spotting hidden weaknesses in software. Through simulations in Project Glasswing, it has already uncovered thousands of potential zero-day vulnerabilities across major systems. The same skill that helps defenders patch these flaws could help attackers exploit them, and I believe this dual-use nature is exactly why Anthropic chose not to release the model openly.

Instead, they are giving controlled access to around 40 carefully selected companies, including big names in tech and finance, so they can strengthen their defenses before bad actors catch up.

Here is a quick look at how Claude Mythos Preview performs compared to its predecessor:

- SWE-bench Verified: 93.9% (previous best around 80.8%)

- SWE-bench Pro: 77.8% (previous best around 53.4%)

- Terminal-Bench 2.0: 82.0% (previous best around 65.4%)

These are gains of roughly 13, 24, and 17 percentage points, respectively, which represent real leaps in agentic coding and practical software engineering skills.

Amodei also stressed the relentless speed of AI development. As he put it, “There’s no end to the rainbow,” a reminder that progress is not slowing down. I find this both exciting and a bit sobering. While the US is pushing for collaboration between government and industry, Chinese models such as Kimi K2.5 and GLM-5 are narrowing the gap fast. This puts real pressure on security and policy decisions.

I have been following these developments closely, and what strikes me most is how Claude Mythos Preview forces everyone to think differently about responsible AI deployment. The model is powerful enough to act as both a defender and a potential threat, which is why controlled rollout through Project Glasswing makes sense right now.

In my view, this rapid catch-up cycle means developers, cybersecurity teams, and companies cannot afford to wait. They should start preparing their systems now for the stronger AI-assisted coding and vulnerability-hunting tools that will soon become widely available.

Here is what I expect to happen next. Within months, we will likely see similar high-performing models from multiple labs entering the market. This will accelerate innovation in software development and cybersecurity, but it will also raise the stakes for digital safety. Professionals in these fields should focus now on upskilling with AI tools, auditing their current infrastructure, and building stronger human-AI collaboration workflows.

Overall, I feel this moment marks another turning point. The pace Amodei described is real, and staying ahead will require continuous learning and smart, responsible adoption of these powerful new capabilities.

FAQs

What is Claude Mythos Preview?

It is Anthropic’s latest frontier model, announced on April 7, 2026. It leads in coding and cybersecurity benchmarks but is currently available only to select partners.

Why is Claude Mythos Preview not publicly released?

Because the model is so effective at discovering vulnerabilities, Anthropic is limiting access through Project Glasswing so that partner companies can fix security issues first, before bad actors gain similar capabilities.

How good is Claude Mythos Preview at coding?

It achieved 93.9% on SWE-bench Verified, up from a previous best of around 80.8%, showing excellent performance on real software engineering tasks.

What did Dario Amodei mean by “There’s no end to the rainbow”?

He used the phrase to highlight that AI progress is continuous and accelerating, with no final stopping point in sight.

How should companies prepare for models like Claude Mythos?

Companies should audit their systems for vulnerabilities, train teams on AI-assisted coding, and explore secure ways to integrate advanced models into their workflows.