When I checked the latest numbers from Alibaba, one thing stood out immediately: the new Qwen3.6-27B shows that smart design can beat raw size in real-world coding tasks.

Released on April 21, 2026 under the fully open Apache 2.0 license, this dense model delivers flagship-level performance while staying compact and accessible.

Why Smaller Can Be Better in AI Coding

Most teams chase bigger parameter counts for better results. Qwen3.6-27B flips that script. With only 27 billion parameters, it beats Alibaba’s own much larger Qwen3.5-397B-A17B, a mixture-of-experts (MoE) model with 397 billion total parameters and about 17 billion active per token, on each of the coding benchmarks in the table below.

This suggests that focused training and architecture choices can deliver more capability per parameter than simply scaling up.

Benchmark Performance That Turns Heads

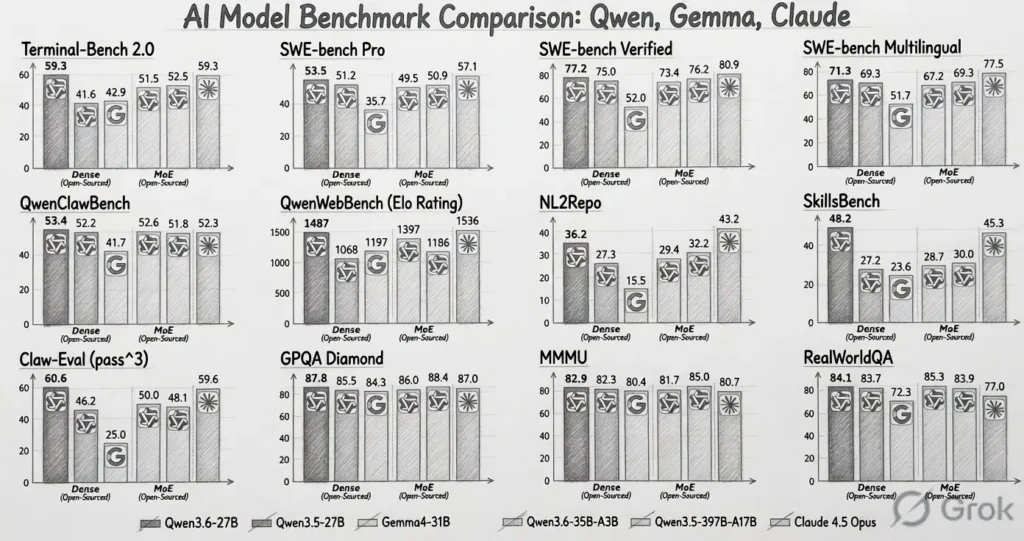

The scores speak for themselves. On SWE-bench Verified, the model hits 77.2%, edging out the 397B model’s 76.2%. It also matches top closed models on agentic tasks such as Terminal-Bench 2.0, where it ties Claude Opus 4.5 at 59.3%. Here is a side-by-side view of the key results:

| Benchmark | Qwen3.6-27B | Qwen3.5-397B-A17B | Claude Opus 4.5 |
|---|---|---|---|
| SWE-bench Verified | 77.2% | 76.2% | 80.9% |
| SWE-bench Pro | 53.5% | 50.9% | 57.1% |
| Terminal-Bench 2.0 | 59.3% | 52.5% | 59.3% |
| SkillsBench Avg5 | 48.2% | 30.0% | – |

These gains come from a hybrid architecture that blends advanced attention mechanisms with smart optimization. Developers now get near-frontier coding power without needing massive infrastructure.

Multimodal Power and Massive Context

Qwen3.6-27B handles text, images, and video in one unified model. It offers a native 262,000-token context window that supports long codebases or detailed documents. Users can also switch between thinking and non-thinking modes depending on the task. This flexibility makes the model practical for everything from quick code suggestions to deep multimodal analysis.

Easy Deployment on Everyday Hardware

One of the biggest wins is accessibility. With quantized builds from tools like Unsloth, enthusiasts can run the model on GPUs with about 18GB of VRAM. This opens the door for local development, privacy-focused teams, and smaller companies that cannot afford expensive cloud setups. The compact size combined with strong performance creates a rare balance that many developers have been waiting for.
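To see why quantization is what makes an 18GB card workable, a rough back-of-the-envelope estimate helps. The sketch below assumes weight memory is simply parameter count times bits per weight divided by 8; it ignores the KV cache, activations, and runtime overhead, and the bit widths shown are illustrative, not the exact formats Unsloth ships. The helper name `weight_gb` is hypothetical.

```python
# Rough weight-memory estimate for a dense model at different precisions.
# Assumption: memory ~= parameter count * bits per weight / 8.
# This ignores the KV cache, activations, and runtime overhead.

PARAMS = 27e9  # 27 billion parameters, per the article


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Return approximate weight storage in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # Compare full 16-bit weights with common quantized widths.
    for label, bits in [("BF16", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label:>5}: {weight_gb(PARAMS, bits):5.1f} GB")
```

At 16-bit precision the weights alone come to about 54 GB, roughly three times an 18GB budget, while 4 bits per weight brings them down to about 13.5 GB. Practical 4-bit formats also store scaling data, so their effective size lands somewhat higher, and whatever memory remains goes to the KV cache, which grows with context length.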

What Changes Next for Coders and Teams

I expect this release to accelerate the shift toward efficient local AI. More developers are likely to adopt open models for daily workflows instead of relying solely on cloud APIs, which should lower the cost and raise the speed of building AI-assisted coding tools. Small teams and indie hackers stand to gain the most, since they get professional-grade capabilities at low cost.

Professionals should start testing Qwen3.6-27B now on their current projects. Experiment with the multimodal features for code documentation or image-based UI tasks. Those who integrate it early are likely to gain a productivity edge as the model ecosystem grows.

Overall, Alibaba has delivered a model that redefines expectations at the 27B scale. It proves powerful coding AI can be open, efficient, and ready for real use today.

FAQs

What makes Qwen3.6-27B different from previous Qwen models?

It is a dense 27B model that outperforms Alibaba’s own much larger Qwen3.5-397B-A17B MoE model on key coding benchmarks while offering native multimodal support and a 262,000-token context window.

Can I run Qwen3.6-27B on consumer hardware?

Yes. With quantization and tools like Unsloth, it runs on GPUs with about 18GB of VRAM, making it a good fit for local setups.

Is the model fully open-source?

Yes. The weights are released under the Apache 2.0 license, which permits commercial use, modification, and redistribution, subject to the license’s notice and attribution requirements.

How does it compare to Claude Opus 4.5?

It ties Claude on Terminal-Bench 2.0 (59.3%) and trails it by less than four points on SWE-bench Verified and SWE-bench Pro, while being open-weight and able to run locally, which Claude cannot.

What should developers do first with this model?

Download the weights from Hugging Face, test it on your existing coding workflows, and explore the switchable thinking modes for different task types.