OpenAI dropped Privacy Filter on April 22 as its first open-weight model of 2026.

The move gives developers a practical tool to automatically spot and remove sensitive personal information before it ever reaches training data or user-facing systems.

Privacy Filter runs entirely locally in browsers or on regular devices. This design solves a growing headache for teams that want strong privacy without sending data to the cloud.

What Is Privacy Filter and Why It Matters

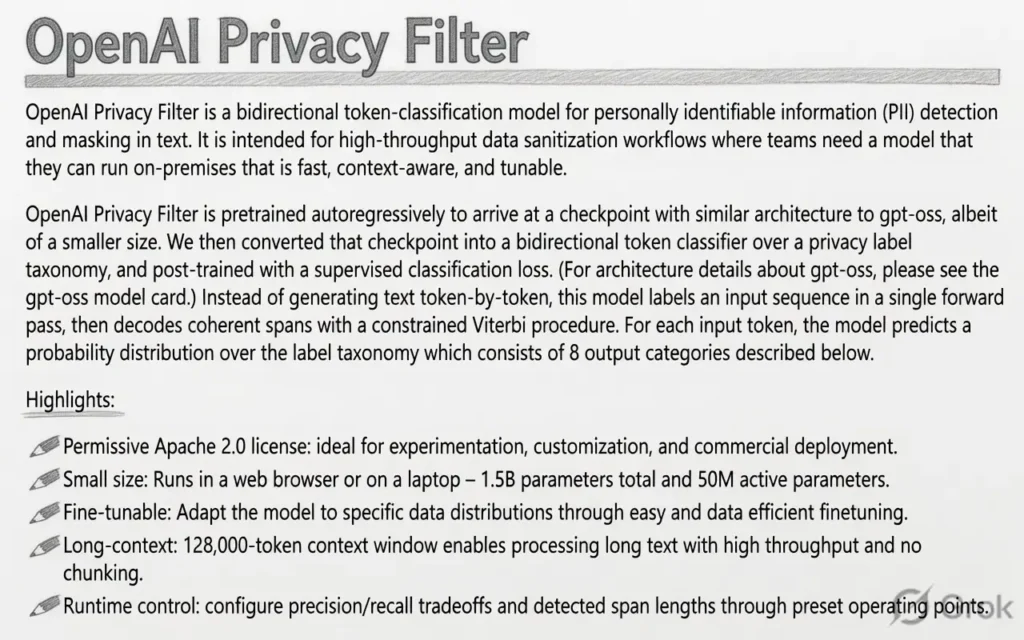

The model weighs in at 1.5 billion total parameters but activates only 50 million per token, thanks to a sparse Mixture-of-Experts (MoE) architecture. It handles up to 128,000 tokens in a single pass, which makes it suitable for long documents or chat logs.
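The article does not describe Privacy Filter's internal routing. To show why a sparse MoE model only "activates" a fraction of its weights, here is a generic sketch of top-k expert routing. It assumes a router that scores every expert for each token, a hypothetical k=2, and toy experts that simply scale their input. It is not OpenAI's implementation. The last line reproduces the arithmetic behind the 50 million / 1.5 billion figures quoted above.

```python
import math
from typing import Callable, List, Tuple

def softmax(xs: List[float]) -> List[float]:
    # Subtract the max for numerical stability before exponentiating.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_route(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x: float, experts: List[Callable[[float], float]],
                router_logits: List[float], k: int = 2) -> float:
    """Weighted sum over only the selected experts; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in top_k_route(router_logits, k))

# Toy experts: each just scales its input (real experts are feed-forward networks).
experts = [lambda x, s=s: s * x for s in range(8)]
router_logits = [0.0, 5.0, 5.0, 0.0, -1.0, 0.5, 0.2, -3.0]
print(moe_forward(2.0, experts, router_logits, k=2))  # 3.0: experts 1 and 2, weight 0.5 each

# Share of parameters active per token, using the figures quoted above.
print(f"{50e6 / 1.5e9:.1%}")  # 3.3%
```

Only the chosen experts are evaluated for a given token, so compute per token scales with the active parameters rather than the total.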

OpenAI released it under the permissive Apache 2.0 license. Developers can download, modify, fine-tune, and even ship commercial products built on it, subject only to the license's attribution and notice requirements.

The model identifies and redacts eight categories of personally identifiable information (PII). It handles text ranging from emails and customer support logs to code snippets that contain secrets.

Key Capabilities That Stand Out

Here is exactly what the model can detect and mask:

- Emails, phone numbers, and physical addresses

- Names and bank account details

- API keys, passwords, and secret credentials

- IP addresses and other location data
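Once sensitive items like those above are found, masking them is mostly bookkeeping over character offsets. The sketch below assumes a detector that returns `(start, end, label)` spans with exclusive ends; Privacy Filter's real output format and label names are not given here, so `NAME` and `EMAIL` are hypothetical labels. Overlapping spans are merged first so a placeholder never lands inside another one.

```python
from typing import List, Tuple

Span = Tuple[int, int, str]  # (start, end, label); end is exclusive

def merge_spans(spans: List[Span]) -> List[Span]:
    """Sort spans by start and merge overlaps; a merged span keeps the first span's label."""
    merged: List[Span] = []
    for start, end, label in sorted(spans):
        if merged and start < merged[-1][1]:
            prev_start, prev_end, prev_label = merged[-1]
            merged[-1] = (prev_start, max(prev_end, end), prev_label)
        else:
            merged.append((start, end, label))
    return merged

def redact(text: str, spans: List[Span]) -> str:
    """Replace each detected span with a [LABEL] placeholder and keep the rest of the text."""
    pieces = []
    cursor = 0
    for start, end, label in merge_spans(spans):
        pieces.append(text[cursor:start])
        pieces.append(f"[{label}]")
        cursor = end
    pieces.append(text[cursor:])
    return "".join(pieces)

text = "Contact Jane Doe at jane@example.com."
name_start = text.index("Jane Doe")
email_start = text.index("jane@example.com")
spans = [
    (email_start, email_start + len("jane@example.com"), "EMAIL"),
    (name_start, name_start + len("Jane Doe"), "NAME"),
]
print(redact(text, spans))  # Contact [NAME] at [EMAIL].
```

Typed placeholders keep the surrounding sentence readable for downstream processing while removing the values themselves.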

It achieves a state-of-the-art F1 score of 96 percent on the PII-Masking-300k benchmark, rising to 97.43 percent on a corrected version of the same test. Precision and recall both stay high: high recall means fewer missed items, and high precision means fewer false alarms.
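F1 is the harmonic mean of precision and recall, so a high F1 requires both to be high at once. The counts below are hypothetical and not taken from the benchmark; how PII-Masking-300k matches predicted spans to gold spans is not specified in this article.

```python
from typing import Tuple

def precision_recall_f1(tp: int, fp: int, fn: int) -> Tuple[float, float, float]:
    """Precision, recall, and F1 from true-positive, false-positive, and false-negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of flagged items that were real PII
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of real PII that was flagged
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# Hypothetical counts, not taken from any benchmark.
balanced = precision_recall_f1(tp=90, fp=10, fn=10)  # precision 0.9, recall 0.9, F1 about 0.9
lopsided = precision_recall_f1(tp=50, fp=0, fn=50)   # precision 1.0, recall 0.5
print(round(lopsided[2], 3))  # 0.667
```

The lopsided case shows why F1 is a useful headline number for redaction: a detector that never raises a false alarm but misses half the PII still scores only about 0.67.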

OpenAI also ships a simple command-line interface for fine-tuning. This lets teams adapt the model quickly to their own data types or stricter internal policies.

How Developers Can Start Using It Today

Because it runs locally, Privacy Filter fits into existing workflows with little friction. You can drop it into web apps, desktop tools, or backend pipelines without paying for cloud API calls or adding network latency.
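A minimal sketch of where such a filter could sit in a backend pipeline, assuming the detector is any local function that returns `(start, end, label)` spans. The actual Privacy Filter loading and inference API is not described here, so `regex_email_detector` is a hypothetical stand-in that only catches email addresses; you would swap in a call to the local model.

```python
import re
from typing import Callable, Iterable, Iterator, List, Tuple

Span = Tuple[int, int, str]
Detector = Callable[[str], List[Span]]

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")

def regex_email_detector(text: str) -> List[Span]:
    """Hypothetical stand-in for a local model call: flags only email addresses."""
    return [(m.start(), m.end(), "EMAIL") for m in EMAIL_RE.finditer(text)]

def scrub_records(records: Iterable[str], detect: Detector) -> Iterator[str]:
    """Redact each record locally before it is stored in a dataset or shown to users."""
    for text in records:
        # Replace right to left so earlier offsets stay valid (assumes non-overlapping spans).
        for start, end, label in sorted(detect(text), reverse=True):
            text = text[:start] + f"[{label}]" + text[end:]
        yield text

records = ["Mail bob@example.org today", "No PII here"]
print(list(scrub_records(records, regex_email_detector)))
# ['Mail [EMAIL] today', 'No PII here']
```

Keeping the detector as a plain callable means the pipeline code does not change when the regex is replaced by the model, and nothing leaves the machine at either step.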

OpenAI already uses an internal version of the model itself, a strong sign that the approach works at scale. Now the community gets the same technology to experiment with and improve.

For anyone concerned about data leaks during AI training or inference, this tool offers an easy first line of defense. It keeps sensitive details out of datasets while preserving the rest of the text for useful processing.

What This Changes for Privacy in AI

This release feels like a real step toward AI that is safer by design. I expect more teams to add automatic redaction as a standard step in their pipelines. The biggest change will be in how quickly and simply teams can protect user data, with far less need for complex custom scripts or expensive third-party services.

The developers most affected will be those building chatbots, customer support tools, and enterprise apps. They should start testing Privacy Filter now on sample datasets and fine-tune it for their specific use cases. The earlier you integrate it, the faster you can ship privacy-first features that users actually trust.

Overall, I see this as OpenAI giving the community a practical building block for responsible AI development. The preview invites feedback, so the next versions could become even stronger. For now, this model makes it genuinely easier to keep sensitive information private while still moving fast on AI projects.